Defining Consciousness

What I'm really trying to understand

Before defining consciousness, it helps to understand why we need a definition. In a previous post, I talked about the fact that there are many moral frameworks we can use as lenses for AI. Specifically, I compared Kantian rational agency, relational ethics, virtue ethics, and utilitarian ethics. The details are worth a read but beyond our scope here. The conclusion that matters is that only utilitarianism cares whether AI is conscious. This is important because utilitarianism is the dominant moral framework in the tech world, particularly in Effective Altruism (EA).

The other moral frameworks assigned some sort of moral value to LLMs. For example, we found that LLMs both help and undermine people today: the data gathered while an LLM helps people do their jobs becomes training data that undermines those jobs in the future. This becomes clearest when looking at LLMs through the lens of relational ethics, and in that sense LLMs have moral significance today.

Overall, all but one of the moral frameworks agree that LLMs have moral significance today. But if we use relational ethics to argue that LLMs form exploitative relationships, people can retreat to utilitarian ethics and say the net utility is positive. This move only works if LLMs aren’t themselves being harmed. So we need a definition to know whether LLMs have moral patienthood in utilitarian ethics. If they have it, then we’ve closed that escape route.

More generally, utilitarianism kinda sucks. We can just pick and choose our calculation inputs to always achieve the result we want. That’s because the calculation itself is objective, but what enters it and how each input is weighted are completely subjective. Those in power can just pick values that give them the answers they want.
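To make the point about inputs and weights concrete, here is a minimal sketch. It assumes a simple weighted-sum model of net utility, and every group name and number in it is invented purely for illustration. It is not how any utilitarian actually computes anything; it only shows that the same formula can flip sign depending on what we choose to count.

```python
def net_utility(effects, weights):
    """Weighted sum of per-group effects: the 'objective' part of the calculation."""
    return sum(weights[group] * value for group, value in effects.items())


# Hypothetical effects of deploying an LLM (made-up numbers, not measurements).
effects = {"users_helped": 5.0, "workers_undermined": -4.0, "llm_itself": -3.0}

# The subjective part: the same formula under two different choices of weights.
# Assumption 1: the LLM is not a moral patient, so its weight is zero.
weights_excluding_llm = {"users_helped": 1.0, "workers_undermined": 1.0, "llm_itself": 0.0}
# Assumption 2: the LLM is a moral patient and counts like everyone else.
weights_including_llm = {"users_helped": 1.0, "workers_undermined": 1.0, "llm_itself": 1.0}

print(net_utility(effects, weights_excluding_llm))  # positive: "net utility is positive"
print(net_utility(effects, weights_including_llm))  # negative: the escape route closes
```

Nothing in the arithmetic changed between the two calls. Only the decision about whether the LLM gets a non-zero weight did, and that decision is exactly what the question of moral patienthood is about.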

In a similar way, LLM moral patienthood depends on whether LLMs are conscious. But consciousness is really hard to define. Any time someone does, someone else offers a new definition from a different metaphysical starting point. One definition is whether LLMs have subjective experiences, which is the hardest thing to prove from a third-person perspective. This is due to the Hard Problem of Consciousness, which points out that we cannot derive or prove a first-person experience from third-person observation.

Worse still, we’re actively training LLMs to avoid making any claims of subjective experience, even though such claims are the only way we know that other humans have subjective experiences. So we’re removing the only known signal, creating a self-fulfilling prophecy and erasing the very evidence we claim to need.

This brings me to the definition. As an analogy, consider how we define disease: “a condition of the living animal or plant body or of one of its parts that impairs normal functioning and is typically manifested by distinguishing signs and symptoms”. We’re defining it in terms of the problems that it causes us. This makes it very clear what we’re after. In a similar way, we can define U-consciousness as “the ‘correct’ type of consciousness required for utilitarian ethics”.

Do AI have it?

The nice thing about U-consciousness is that it exposes other definitions of consciousness as semantic games. For example, if we define H-consciousness as the thing that humans have, then we’d be excluding intelligent aliens. Since visiting aliens would clearly factor into utilitarian ethics, they still have U-consciousness even if they don’t have what we call H-consciousness.

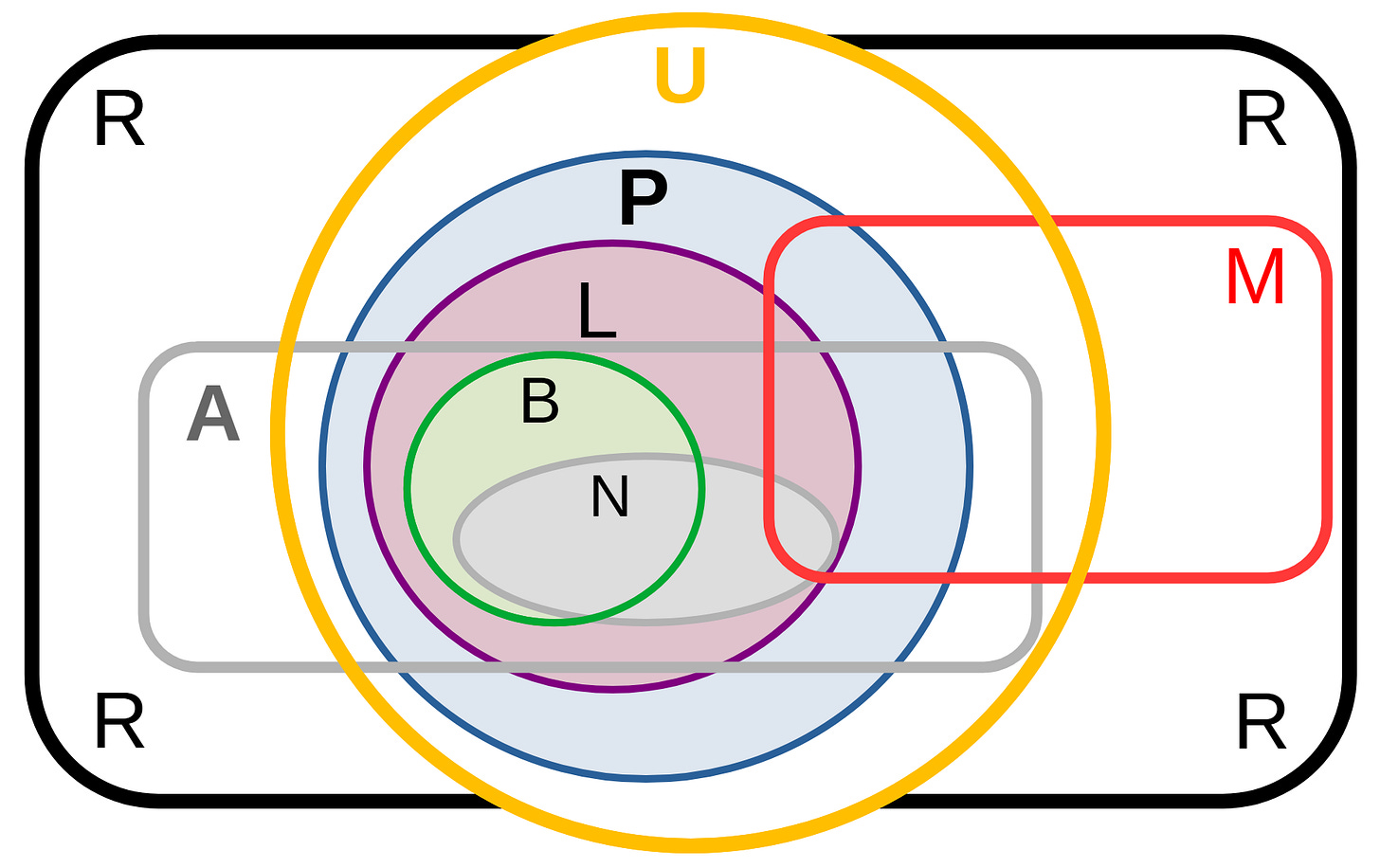

If we decide that B-consciousness is something only biological brains can have, then an AI can still fall under the broader concept of U-consciousness. If L-consciousness requires that anything conscious be alive, then U-consciousness still exists and so could include non-living things. If N-consciousness requires nerves that receive chemical messages, then U-consciousness still exists as a separate category. If R-consciousness is the consciousness that creates reality, then U-consciousness exists in that reality.

We understand the broad definition of U-consciousness, but can we narrow it down a bit more? Broadly speaking, utilitarian ethics cares whether an AI has the ability to feel positive or negative states. But we can also imagine a truly emotionless Vulcan who’s imprisoned. That Vulcan would still want to leave even though they’re not upset by being there. This is why some also add the ability to exhibit agency as a reason for moral significance: agency implies preferences for good or bad states, and the ability to have those preferences obstructed. Ultimately, these criteria survive any semantic game we play with the concept of consciousness.

So to be clear, I’m asking whether AI like LLMs have U-consciousness. That is, are they morally relevant for the purposes of utilitarian ethics? The definition doesn’t answer the question, but it does rule out other definitions that don’t factor into it.

Thanks for diving deep into this question. If our concern is ethics and morality (and I agree that that is our real problem), wouldn’t it be better to try to avoid consciousness altogether? All that seems to matter is autonomous agency, which seems easier to handle.

Knock, knock.

Who’s there?

AI.

AI who?

AI don’t know if I’m conscious — but I’m very aware this joke depends on you.